The most common AI story I hear from auditors

It goes like this.

Someone on the team, usually one of the more curious ones, the person who reads about this stuff and actually tries it, opens ChatGPT during a busy engagement. They type something like “what are the key risks in a procurement process?” They read what comes back. It’s generic. It could apply to any organisation, any industry, any country. It tells them nothing they didn’t already know.

They close the tab. They go back to Word. And the conclusion they quietly reach, sometimes said out loud, sometimes just felt, is: “AI doesn’t really work for audit.”

I’ve heard this story more times than I can count. And I understand it completely. The expectations were set by people who had something to sell. The demos were polished. The use cases were cherry-picked. The reality of sitting down with an AI tool in the middle of a real engagement, under time pressure, and getting something actually useful? That part didn’t get nearly as much coverage.

But here’s what I want to say to every auditor who had that experience and walked away: the tool wasn’t the problem. The expectation was. This article is really about how to use AI in audit work in a way that actually delivers, which starts with rethinking what AI is for.

What we were told AI would do versus what it actually does

The version of AI that got sold to us, in the articles, the conferences, the breathless LinkedIn posts, was something close to a replacement. A system smart enough to understand audit work, generate insights, and do the heavy lifting while we focused on the high-value thinking.

That version doesn’t exist yet. And trying to use AI as if it does is the reason most people end up disappointed.

What AI tools actually are right now, today, in a way that’s genuinely useful in audit work, is the best research and drafting assistant you’ve ever had. Not an auditor. Not a replacement for professional judgement. An assistant. Exceptionally fast, available at any hour, and capable of handling the kind of work that slows you down without necessarily requiring your expertise: first drafts, document summaries, research briefs, language refinement, format conversion. (For concrete examples of where this actually saves time in practice, see our practical guide to AI in internal audit.)

The moment you stop asking AI to be an auditor and start asking it to be your assistant, everything changes.

This distinction sounds simple. In practice it requires a real shift in how you approach the tool: what you ask it, how much context you give it, and what you do with what it gives you back.

The expectation gap in practice and what different looks like

Let me show you what this shift looks like with three examples from actual audit work, because abstract advice is easy to ignore.

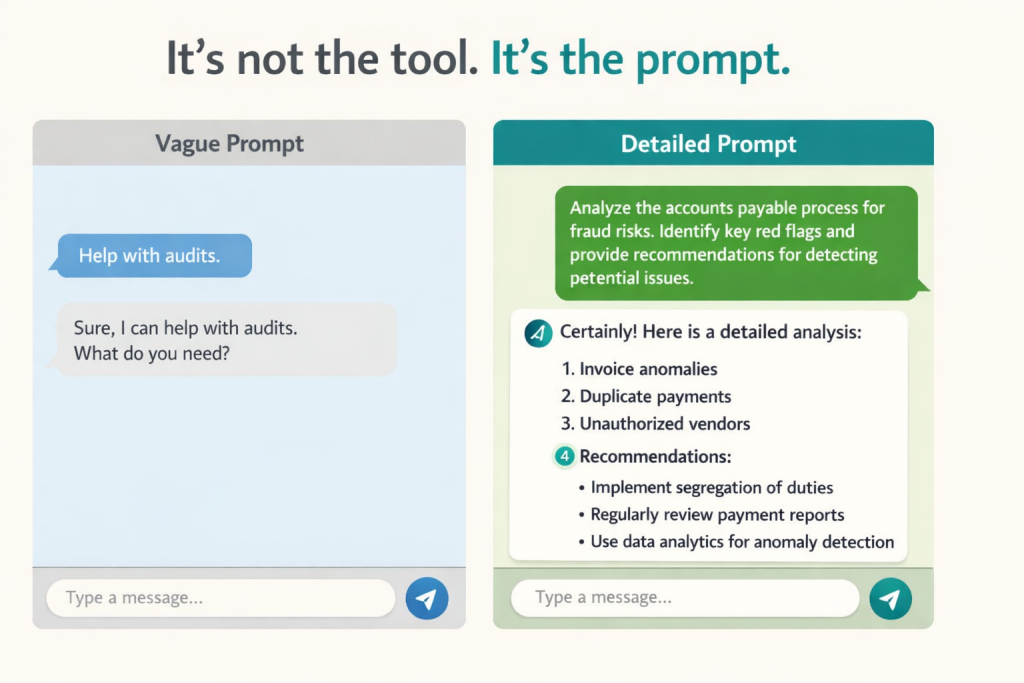

The risk assessment scenario. The common approach: open AI, type “what are the risks in a procurement process?” Get a generic list that could have come from a textbook. Feel underwhelmed.

The better approach: “I am an internal auditor reviewing the procurement process at a mid-sized NGO operating in Lebanon. The process covers purchase requests, three-quote requirements, approval thresholds, and GRN matching. We’ve had two findings in previous audits related to split purchasing and approval bypasses. Based on this context, what are the top five fraud and compliance risks I should prioritise in this audit cycle, and what is one key control to test for each?”

The output from the second prompt is specific, actionable, and genuinely useful as a starting point for your planning. Nothing changed about the tool. Everything changed about what you asked it.

The audit finding scenario. The common approach: ask AI to “write my audit finding.” Get something hollow that vaguely describes a control weakness in language that fits no one’s organisation. Spend more time editing it than it would have taken to write it yourself. Give up.

The better approach: paste your actual observation notes: what you saw, what the standard or policy says, what the root cause appears to be, and what the likely impact is if nothing changes. Then ask AI to structure this as an audit finding in IIA format with condition, criteria, cause, effect, and a specific, time-bound recommendation. The output will be close to publication-ready. You review it, adjust it, add your judgement on the rating. The hard part, getting professional language around your raw observations, is done.

The control assessment scenario. The common approach: ask AI “is this control effective?” Get a non-answer, because AI has no way to assess effectiveness without seeing the control operating. Feel like the tool is useless for anything technical.

The better approach: describe the control in detail (what it’s designed to do, how it operates, who is responsible, what the frequency is) and ask AI what weaknesses an experienced auditor might look for in a control of this type. You’ll get a useful list of things to probe during testing. Not a conclusion, a set of questions. That’s exactly what you need at that stage of the work.

In every case the tool is the same. The prompt is different. The output is completely different.

The one shift that changes everything

What these examples have in common is specificity. The more context you give AI, the more useful the output. This sounds obvious when you say it out loud: of course a more detailed question gets a more relevant answer. But in practice, most people open AI the way they open Google. A short phrase. A general question. And AI is not a search engine. (And as auditors, knowing how to use AI in audit means also understanding the governance side of AI for auditors, not just the productivity side.)

There’s a simple formula I use that changed how I approach every AI interaction in audit work. It has four parts: Role, Task, Context, and Format.

Role: tell AI what it is for this interaction. “You are an experienced internal auditor reviewing a procurement function.” This frames every response around the perspective you need.

Task: be specific about what you want. Not “help me with my audit finding” but “draft an audit finding using the observation below.”

Context: give it everything relevant. The industry, the organisation type, the regulatory environment, the specific process, the prior findings. AI works with what you give it. The more you give, the better it works.

Format: tell it how you want the output structured. IIA format. Bullet points. Executive summary language. Table. Whatever fits your working paper or report.
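For readers who like to see a structure written down, here is a minimal Python sketch of the four parts as a reusable object. It is only an illustration: the class name `AuditPrompt`, the field names, and the suggested table columns are my own assumptions, and the context text is lifted from the procurement example earlier in this article. It calls no AI tool; it only assembles the text you would paste into one.

```python
from dataclasses import dataclass


@dataclass
class AuditPrompt:
    """One prompt split into the four parts: Role, Task, Context, Format.

    Hypothetical helper for illustration; not part of any AI tool's API.
    """
    role: str
    task: str
    context: str
    output_format: str

    def render(self) -> str:
        parts = {
            "Role": self.role,
            "Task": self.task,
            "Context": self.context,
            "Format": self.output_format,
        }
        # Refuse to build a prompt with an empty part: a missing part is
        # what turns a structured prompt back into a vague one.
        missing = [name for name, text in parts.items() if not text.strip()]
        if missing:
            raise ValueError("Missing prompt parts: " + ", ".join(missing))
        return "\n\n".join(f"{name}: {text.strip()}" for name, text in parts.items())


# Example values taken from the procurement scenario in this article.
prompt = AuditPrompt(
    role="You are an experienced internal auditor reviewing a procurement function.",
    task=(
        "Identify the top five fraud and compliance risks to prioritise in this "
        "audit cycle, and one key control to test for each."
    ),
    context=(
        "Mid-sized NGO operating in Lebanon. The process covers purchase requests, "
        "three-quote requirements, approval thresholds, and GRN matching. Two "
        "previous audit findings related to split purchasing and approval bypasses."
    ),
    # The column layout below is an assumed example of a Format instruction.
    output_format="Table with columns: risk, why it matters here, key control to test.",
)

print(prompt.render())
```

The check inside `render` is the useful part: it makes a forgotten Context or Format impossible to overlook before the prompt is ever sent.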

A prompt that follows this structure takes about ninety seconds longer to write than a vague one. The output is ten times more useful. That trade-off is worth making every time.

What this looks like inside a real audit function

I want to be specific here because “building an AI habit” sounds like something that belongs in a productivity blog, not an audit manual.

What actually happened in practice, in a real audit team that went from “AI doesn’t work for us” to using it in every engagement, was not a big transformation project. It was a small library.

Ten prompts. That’s it. Ten tested, refined prompts for the ten most common audit tasks: finding drafts, executive summaries, risk brainstorms, control weakness descriptions, management letter language, and a few others. Each one following the Role-Task-Context-Format structure. Each one tested, adjusted, and confirmed to produce useful output.

When a team member needed to draft a finding, they didn’t start from scratch with AI. They opened the prompt library, picked the finding template, filled in their specific observation details, and ran it. The output was consistently good because the prompt was consistently structured.
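A library like this does not need special software; a shared document works. For teams that prefer something scriptable, the sketch below shows one possible shape using Python's standard `string.Template`. The names `PROMPT_LIBRARY` and `fill_prompt`, the template wording, and the sample observation are all hypothetical, written for this illustration and not taken from the team's actual library.

```python
from string import Template

# Hypothetical two-entry library; a real one would hold the team's own
# tested wording for each of its common tasks.
PROMPT_LIBRARY = {
    "finding_draft": Template(
        "Role: You are an experienced internal auditor.\n"
        "Task: Draft an audit finding using the observation below.\n"
        "Context:\n"
        "- Observation: $observation\n"
        "- Standard or policy: $criteria\n"
        "- Apparent root cause: $cause\n"
        "- Likely impact if nothing changes: $impact\n"
        "Format: IIA format with condition, criteria, cause, effect, "
        "and a specific, time-bound recommendation."
    ),
    "control_weaknesses": Template(
        "Role: You are an experienced internal auditor.\n"
        "Task: List weaknesses an experienced auditor might look for "
        "in a control of this type.\n"
        "Context: $control_description\n"
        "Format: Bullet points, each phrased as a question to probe during testing."
    ),
}


def fill_prompt(name: str, **details: str) -> str:
    """Pick a template by name and fill in the engagement-specific details.

    Template.substitute raises KeyError if a placeholder is left unfilled,
    so an incomplete prompt fails loudly instead of going out half-empty.
    """
    return PROMPT_LIBRARY[name].substitute(**details)


# Invented sample details, for illustration only.
finding_prompt = fill_prompt(
    "finding_draft",
    observation="Three purchase orders to one vendor on the same day, each just under the approval threshold.",
    criteria="Procurement policy requires senior approval above the threshold.",
    cause="No system check for same-day orders to the same vendor.",
    impact="Purchases can bypass senior approval through splitting.",
)

print(finding_prompt)
```

The fixed wording lives in the template and only the observation details change from one engagement to the next, which is what keeps the output consistent.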

This is the difference between AI as a novelty and AI as a working tool. Novelty means trying something new every time and getting unpredictable results. A working tool means having tested inputs that produce reliable outputs.

Building that library doesn’t take long. A focused afternoon working through your most common tasks, testing and refining the prompts, is enough to get started. Everything after that is iteration.

Is it worth trying again?

If you tried AI six months ago and walked away unimpressed, yes, it’s worth another attempt. But not the same attempt.

Don’t open a generic AI tool and ask it a general question. Pick one specific task from your current engagement. Write a prompt that follows the Role-Task-Context-Format structure. Give it real context from your actual work. See what comes back.

The difference between that experience and your previous one will be significant enough that you’ll understand what I mean about the expectation gap, not because I explained it well, but because you felt it yourself.

If you want to skip the trial and error and see exactly how this works on a real audit scenario, live, with real prompts and honest commentary on what the output looks like and how to review it, I run a free webinar for audit and finance professionals in the MENA region.

It’s sixty minutes. It’s practical. And it starts from the assumption that you’ve already tried AI once and weren’t convinced, because that’s the audience I built it for.

If you want help designing AI workflows for your audit team, you can also explore our AI Audit services and AI Consultancy offerings, or book a free consultation.

Cynthia Merhej, CPA, CISA, AAIA

Founder of AIKit LB · Beirut, Lebanon

Cynthia is an AI advisor, auditor, and educator with 15+ years of experience across the Middle East and Eastern Europe. She holds a Master's in Artificial Intelligence and certifications in CPA, CISA, AAIA (Advanced in AI Audit), ISO 9001 & 27001 Lead Auditor, and IT Specialist in AI. Through AIKit LB she helps organizations adopt AI responsibly through governance, audit, and certified training.