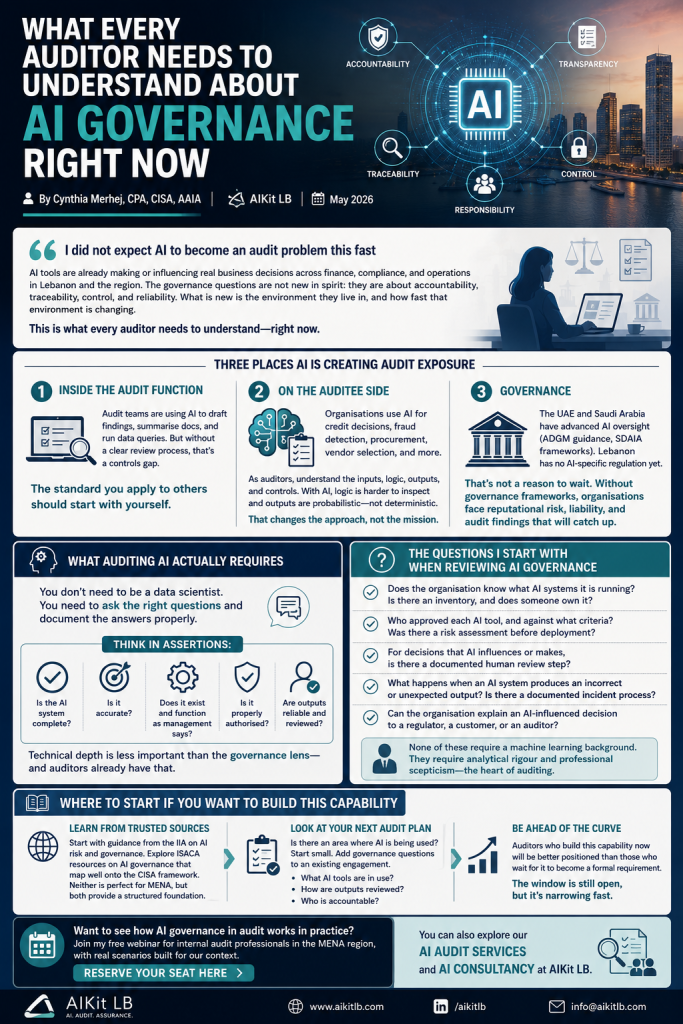

I did not expect AI to become an audit problem this fast

AI governance for auditors used to be a future-tense conversation. When I earned my CISA, AI was barely in the curriculum: a concept, futuristic and mostly theoretical. Now, as an AAIA (Advanced in AI Audit) holder, I see how fast that has changed. AI tools are sitting inside finance departments, compliance teams, and operations functions across Lebanon and the region, making or influencing real business decisions every day.

As someone who came up through both financial audit and information systems audit, I find myself in an interesting position. The governance questions that AI raises are not new in spirit. They are about accountability, traceability, control, and reliability. Those are the same questions I have been trained to ask for years. What is new is the environment they live in, and how fast that environment is changing.

I want to share what I am seeing, and what I think audit professionals need to pay attention to right now.

Three places AI is creating audit exposure

The first one is inside the audit function itself. A lot of audit teams are already using AI tools to draft findings, summarise documentation, and run data queries. I use them too. But using AI in your own work without a clear review process is a controls gap. If an AI-generated finding goes into a working paper without proper human review, you have introduced exactly the kind of uncontrolled risk that auditors are supposed to catch in others. The standard you apply to the organisations you audit should start with yourself.

The second is the auditee side. The organisations and departments we audit are increasingly using AI for consequential decisions: credit assessments, fraud detection, procurement approvals, vendor selection. From a CISA perspective, this is not fundamentally different from auditing any automated system. You need to understand the inputs, the logic, the outputs, and the controls around each. The difference is that with AI, the logic is harder to inspect and the outputs are probabilistic, not deterministic. That changes the audit approach but it does not make it impossible.
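One way to make the "probabilistic, not deterministic" point concrete in a test plan is to re-run the same case several times and measure how often the outcome agrees. The sketch below is illustrative only: `score_application` is a hypothetical stand-in for the auditee's model (simulated here with a seeded random number generator), and the 95% threshold is an assumed tolerance, not a standard.

```python
import random
from collections import Counter

def score_application(application, rng):
    """Hypothetical stand-in for an AI credit decision; not a real model.

    A small random noise term mimics non-deterministic output on borderline cases.
    """
    noise = rng.uniform(-0.05, 0.05)
    return "approve" if application["score"] + noise >= 0.60 else "decline"

def agreement_rate(application, runs=200, seed=7):
    """Re-run the same input; return (most common decision, share of runs agreeing)."""
    rng = random.Random(seed)  # fixed seed so the test itself is reproducible
    outcomes = Counter(score_application(application, rng) for _ in range(runs))
    decision, count = outcomes.most_common(1)[0]
    return decision, count / runs

THRESHOLD = 0.95  # assumed tolerance for this sketch

for case in ({"id": "A-1", "score": 0.80}, {"id": "A-2", "score": 0.61}):
    decision, rate = agreement_rate(case)
    flag = "ok" if rate >= THRESHOLD else "inconsistent: sample for manual review"
    print(case["id"], decision, f"{rate:.0%}", flag)
```

A clear-cut case should agree on every run, while a borderline case will not. The cases that fall below the tolerance are the ones worth pulling into a manual review sample.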

The third is governance. Some countries in the region have already started formalising AI oversight. The UAE and Saudi Arabia have made the most visible moves, through guidance from ADGM (Abu Dhabi Global Market) and frameworks from SDAIA (the Saudi Data and AI Authority) respectively. Lebanon does not yet have AI-specific regulation in place. But that is not a reason to wait. Organisations that have deployed AI tools without governance frameworks are already exposed to reputational risk, liability when outputs are wrong, and audit findings that will eventually catch up with them.

What auditing AI actually requires

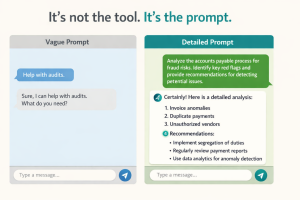

I hear two things from audit colleagues, and they sit at opposite ends. Some say you need to be a data scientist to audit AI properly. You do not. Others say their existing frameworks already cover it. They mostly do not, at least not without significant adaptation.

What you need is the ability to ask the right questions, and the discipline to document the answers properly. As a CPA, I think about this in terms of assertions. Is the AI system complete? Is it accurate? Does it exist and function as management says it does? Is it properly authorised? Those questions translate very directly into an AI audit context.

The technical depth required is no more than audit professionals already bring to any other automated system.

The questions I start with when reviewing AI governance

These are not exhaustive, but they are a solid starting point for any engagement where AI is in scope:

- Does the organisation know what AI systems it is running? Is there an inventory, and does someone own it?

- Who approved each AI tool, and against what criteria? Was there a risk assessment before deployment?

- For decisions that AI influences or makes, is there a documented human review step?

- What happens when an AI system produces an incorrect or unexpected output? Is there a documented incident process?

- Can the organisation explain an AI-influenced decision to a regulator, a customer, or an auditor?

None of these require a machine learning background to ask. They require the same analytical rigour and professional scepticism that good auditors apply to everything else.
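The starting questions above can be sketched as a simple inventory check. This is a minimal illustration under stated assumptions: the `AISystemRecord` fields, the gap wording, and the two sample systems are all hypothetical, and a real engagement would evidence each answer rather than rely on a yes/no flag.

```python
from dataclasses import dataclass

@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory; field names are illustrative."""
    name: str
    owner: str = ""
    approved_by: str = ""
    risk_assessed: bool = False
    human_review_documented: bool = False
    incident_process_documented: bool = False
    decisions_explainable: bool = False

# Each check pairs a gap description with a test that passes when the control exists.
CHECKS = [
    ("No named owner", lambda r: bool(r.owner)),
    ("No recorded approver", lambda r: bool(r.approved_by)),
    ("No pre-deployment risk assessment", lambda r: r.risk_assessed),
    ("No documented human review step", lambda r: r.human_review_documented),
    ("No documented incident process", lambda r: r.incident_process_documented),
    ("Decisions cannot be explained to a third party", lambda r: r.decisions_explainable),
]

def governance_gaps(record):
    """Return the gap descriptions that apply to one inventory record."""
    return [gap for gap, passed in CHECKS if not passed(record)]

# Made-up sample data for illustration only.
inventory = [
    AISystemRecord("Invoice matching assistant", owner="Finance Ops",
                   approved_by="CFO", risk_assessed=True,
                   human_review_documented=True),
    AISystemRecord("Vendor scoring tool"),
]

for record in inventory:
    gaps = governance_gaps(record)
    print(f"{record.name}: {len(gaps)} gap(s)")
    for gap in gaps:
        print("  -", gap)
```

A system that appears in use but not in the inventory at all is itself a finding, which is why the first question comes before the others.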

Where to start if you want to build this capability

Start with the IIA. They have published guidance on AI risk and governance that does not require technical depth to apply. ISACA, the body that issues the CISA, has also produced resources specifically on AI governance that map well onto what IT auditors already know. Neither is perfect for the MENA context, but both give you a structured foundation.

Then look at your next audit plan. Is there an area where you know AI is being used? Start there, not with a full AI audit, but by adding a handful of governance questions to an existing engagement. What AI tools are in use here? How are the outputs reviewed? Who is accountable?

The auditors who build this capability now will be better positioned than those who wait for it to become a formal requirement. That window is still open, but it is narrowing faster than most people in the profession realise.

If you want to see how AI governance in audit works in practice, with real scenarios built for the MENA context, I run a free webinar specifically for internal audit professionals in the region. You can reserve your seat here.

Cynthia Merhej, CPA, CISA, AAIA

Founder of AIKit LB · Beirut, Lebanon

Cynthia is an AI advisor, auditor, and educator with 15+ years of experience across the Middle East and Eastern Europe. She holds a Master's in Artificial Intelligence and the CPA, CISA, AAIA (Advanced in AI Audit), ISO 9001 & 27001 Lead Auditor, and IT Specialist in AI certifications. Through AIKit LB she helps organisations adopt AI responsibly through governance, audit, and certified training.