I Work in Internal Audit and I’ve Been Using AI for a Year. Here’s What I Actually Found.

Nobody prepared us for this

AI in internal audit is no longer a hypothetical conversation. A few years ago, the biggest debate in internal audit was whether data analytics was worth the investment. Whether we had the skills for it. Whether the CAE would sign off on the budget for a proper tool. That felt like a big shift at the time.

Then AI arrived and it made the analytics debate look like a minor disagreement.

Now we’re talking about AI writing our findings, summarising hundreds of pages of documentation in minutes, flagging risks we might have missed, and doing in twenty seconds what used to take an afternoon. The headlines moved fast. Faster, honestly, than most of us could process.

And that’s the problem. Because between the breathless LinkedIn posts about “AI replacing auditors” (which often miss the more important conversation about AI governance for auditors) and the vendor demos that make everything look effortless, most audit professionals I talk to are stuck somewhere in the middle. Curious, but cautious. Interested, but not sure where to start. Wondering whether any of this actually works in a real audit environment: not a demo, not a case study, but actual day-to-day work.

I work in internal audit. I’ve been using AI tools seriously for over a year now, inside a real audit function. This article is not a vendor pitch, not a tool review, and not a theoretical framework. It’s just what I actually found: the parts that work, the parts that don’t, and what I’d tell a fellow auditor who’s thinking about trying it.

The moment it clicked for me

It was late. I had a deadline the next morning and a finding that still needed to be written. Not just drafted, but written properly, with condition, criteria, cause, effect, and a recommendation that didn’t sound like it came from a template.

I’d been staring at my notes for twenty minutes. I knew what the issue was. I’d seen it during fieldwork, I’d documented it, I had everything I needed. But translating all of that into clean, professional finding language at 11pm after a long day is a different skill entirely, and that night I didn’t have it in me.

So I opened an AI tool, gave it my raw observation notes and the context about the process we’d audited, and asked it to draft a finding in IIA format.

What came back wasn’t perfect. It needed editing. The tone in one paragraph was slightly off, and it had made one assumption about the root cause that I needed to correct. But the structure was there. The professional language was there. The hardest part, getting something onto the page, was done.

I spent fifteen minutes editing instead of ninety minutes writing from scratch. The finding was better than it would have been if I’d forced it out at midnight on my own.

That was the moment it stopped being a curiosity and started being part of how I work.

Where AI genuinely saves time in audit work

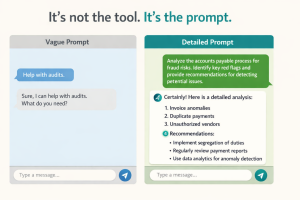

Let me be specific, because “AI saves time” is the kind of vague claim that sounds good and means nothing. Here’s where I’ve actually seen it make a real difference.

Report writing is the obvious one, but it’s worth being precise about why. AI doesn’t write your report for you. What it does is take your rough observations (the bullet points you jotted down during fieldwork, the notes you made after management discussions) and turn them into professional language. Give it your raw material and tell it the format you need. It will give you a draft. You review it, correct it, add your judgement. The thinking is still yours. The drafting is shared.

The same applies to executive summaries. If you’ve ever stared at a completed audit report wondering how to distil twelve pages into half a page for the board, AI is genuinely useful here. Paste the report, ask for a summary written for a non-technical audience, and you’ll have a solid starting point in under a minute.

Audit planning is where I’ve seen people underestimate it most. AI is remarkably good at helping you think through the risk landscape of an unfamiliar area. If you’re about to audit a process or a department you haven’t touched before, ask AI to describe the key risks typically associated with that area, the common control weaknesses, and what an auditor should look for. You’ll get a useful starting list. You’ll still need to validate it against your specific context, but as a first-pass risk brainstorm, it’s faster than anything else I’ve tried.

Research is perhaps the least glamorous but most consistently useful application. Regulatory summaries, sector risk briefings, explanations of complex accounting standards in plain language: AI handles all of this well. It’s like having a research assistant who never gets tired and has read everything.

Where I've seen it go wrong

I’d be doing you a disservice if I only told you the good parts.

The most common problem is trusting AI output without checking it. AI tools can be confidently wrong. They’ll produce a finding that looks completely professional, cites what appears to be a relevant standard, and contains a factual error buried in paragraph three. In most industries, that’s an embarrassment. In audit, it’s a professional liability. Strong AI governance practices for auditors are what close this gap. Every AI output that goes into a working paper or a report needs a human review. Not a skim but a proper check.

The second issue is asking AI to make judgement calls it cannot make. Is this control effective? Is this finding material? Should this go in the report or be a verbal observation? These are questions that require professional scepticism, knowledge of your organisation, and years of experience. AI doesn’t have any of those things. When you ask it to make those calls, you’ll get an answer, but it won’t be a reliable one. Use AI for drafting and research. Keep the judgement.

The third thing I see regularly is copy-pasting AI output directly into working papers without any indication it was AI-assisted. Aside from the quality risk, this raises real questions about documentation standards and, depending on your organisation’s AI policy, potentially compliance ones too. Be transparent about how you’re using these tools. Build a simple review step into your process. It protects you and it protects the quality of the work.
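For teams that keep working papers in a system they can script against, the review step can be as simple as a log of what was AI-assisted and who checked it. The sketch below is only an illustration: the class name, fields, and working paper references are all hypothetical, and your own documentation standards should decide what actually gets recorded.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class AIAssistedEntry:
    """One AI-assisted item in a working paper (hypothetical structure)."""
    working_paper_ref: str
    tool_name: str
    purpose: str
    reviewed_by: Optional[str] = None
    reviewed_on: Optional[date] = None

    def sign_off(self, reviewer: str, when: date) -> None:
        # Record the human review; the entry stays open until this is called.
        self.reviewed_by = reviewer
        self.reviewed_on = when

    @property
    def is_reviewed(self) -> bool:
        return self.reviewed_by is not None


def unreviewed(entries: List[AIAssistedEntry]) -> List[str]:
    """Return the references of entries that still need a human check."""
    return [e.working_paper_ref for e in entries if not e.is_reviewed]


# Illustrative data only.
log = [
    AIAssistedEntry("WP-01", "example-tool", "draft finding wording"),
    AIAssistedEntry("WP-02", "example-tool", "executive summary draft"),
]
log[0].sign_off("Reviewer A", date(2024, 1, 15))
print(unreviewed(log))
```

The point is not the code itself. It is that AI involvement and human sign-off are both written down somewhere a reviewer can see them.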

What this means for auditors in Lebanon and across MENA

Most of the AI content written for auditors is written for a US or UK context. The regulatory references are different. The industry examples are different. The cultural and organisational context is completely different.

If you’re working in Lebanon, you’re operating under the Banking Control Commission’s guidelines, BdL circulars, FATF compliance requirements, and in many cases the Banking Secrecy Law, a framework that has no direct equivalent in Western audit literature. Generic AI content doesn’t account for any of this.

What I’ve found is that AI tools work better when you bring the context to them. Don’t ask a generic question and expect a Lebanon-specific answer. Tell the tool what regulatory environment you’re operating in, what sector your auditee is in, and what standards you’re applying. The output becomes significantly more relevant.
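If you reuse the same context across engagements, it can help to keep it in a small template rather than retyping it. Here is a minimal sketch of that idea in Python. The function name, the wording of the prompt, and the sample values are my own assumptions for illustration, not a required format for any particular tool.

```python
from typing import List


def build_audit_prompt(
    task: str,
    regulatory_context: List[str],
    sector: str,
    standards: List[str],
    notes: str,
) -> str:
    """Assemble a prompt that states the audit context before the request."""
    lines = [
        "You are assisting an internal auditor. Produce a draft for the auditor to review.",
        f"Regulatory environment: {'; '.join(regulatory_context)}",
        f"Auditee sector: {sector}",
        f"Standards applied: {'; '.join(standards)}",
        "",
        f"Task: {task}",
        "",
        "Auditor's notes:",
        notes.strip(),
    ]
    return "\n".join(lines)


# Illustrative values only; replace them with your own engagement context.
prompt = build_audit_prompt(
    task="Draft a finding with condition, criteria, cause, effect and recommendation.",
    regulatory_context=[
        "BdL circulars",
        "Banking Control Commission guidelines",
        "FATF requirements",
    ],
    sector="Commercial banking",
    standards=["IIA Standards"],
    notes="  Placeholder observation notes go here.  ",
)
print(prompt)
```

The resulting text can be pasted into whichever tool your organisation has approved. Nothing here calls an AI service directly.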

This is also why I’m cautious about audit teams in the region who adopt AI tools wholesale based on recommendations from international firms or global training programmes. The principles are transferable. The specifics (the prompts, the context, the quality checks) need to be built for your environment. That work is worth doing. But it’s work that someone needs to do, and it doesn’t happen automatically.

Where to start: one task, this week

If you’ve read this far and you’re thinking about trying AI in your next engagement, here’s my honest advice: start with one task, and start small.

The lowest-risk entry point is your next audit finding. Write it yourself first, the way you normally would. Then take your draft to an AI tool and ask it to improve the language, sharpen the recommendation, or restructure it in IIA format. Compare the two versions.

That comparison alone will teach you more about how AI works in an audit context than any guide or training session. You’ll see where it improves on your draft, where it goes wrong, and what kind of instructions produce better results. You’ll also notice that reviewing AI output is a different skill from writing from scratch, and it’s a skill worth developing.
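Reading the two versions side by side is enough for one finding. If you want the changes laid out line by line, Python's standard `difflib` module can do it. This is a rough sketch with made-up draft text; the helper name `compare_drafts` is hypothetical.

```python
import difflib
from typing import List


def compare_drafts(own_draft: str, ai_draft: str) -> List[str]:
    """Return a unified diff of the two drafts, compared line by line."""
    own_lines = own_draft.strip().splitlines()
    ai_lines = ai_draft.strip().splitlines()
    return list(
        difflib.unified_diff(
            own_lines, ai_lines,
            fromfile="my_draft", tofile="ai_draft", lineterm="",
        )
    )


# Made-up example text, one element of the finding per line.
mine = """Condition: Access reviews were not performed.
Recommendation: Do reviews."""
revised = """Condition: Access reviews were not performed.
Recommendation: Perform quarterly access reviews and retain evidence."""

diff = compare_drafts(mine, revised)
print("\n".join(diff))
```

Lines starting with `-` are from your draft and lines starting with `+` are from the AI version, which makes it easy to see exactly what the tool changed and to decide whether each change is one you agree with.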

If you want to see this done live, on a real audit scenario, with real prompts, with honest commentary about what works and what doesn’t, I run a free webinar specifically for internal audit professionals in the MENA region.

No tech background needed. No tools to buy. Just an honest hour that might change how you think about what’s possible in your work.

You can also explore our AI Audit services and AI Consultancy offerings, or book a free consultation to discuss bringing AI into your internal audit function.

Cynthia Merhej, CPA, CISA, AAIA

Founder of AIKit LB · Beirut, Lebanon

Cynthia is an AI advisor, auditor, and educator with 15+ years of experience across the Middle East and Eastern Europe. She holds a Master's in Artificial Intelligence and certifications in CPA, CISA, AAIA (Advanced in AI Audit), ISO 9001 & 27001 Lead Auditor, and IT Specialist in AI. Through AIKit LB she helps organizations adopt AI responsibly through governance, audit, and certified training.